It isn't you.

Research and software

The IN Agent’s code was written by one AI and adversarially reviewed by another, and the article about it was written by the AI reviewer.

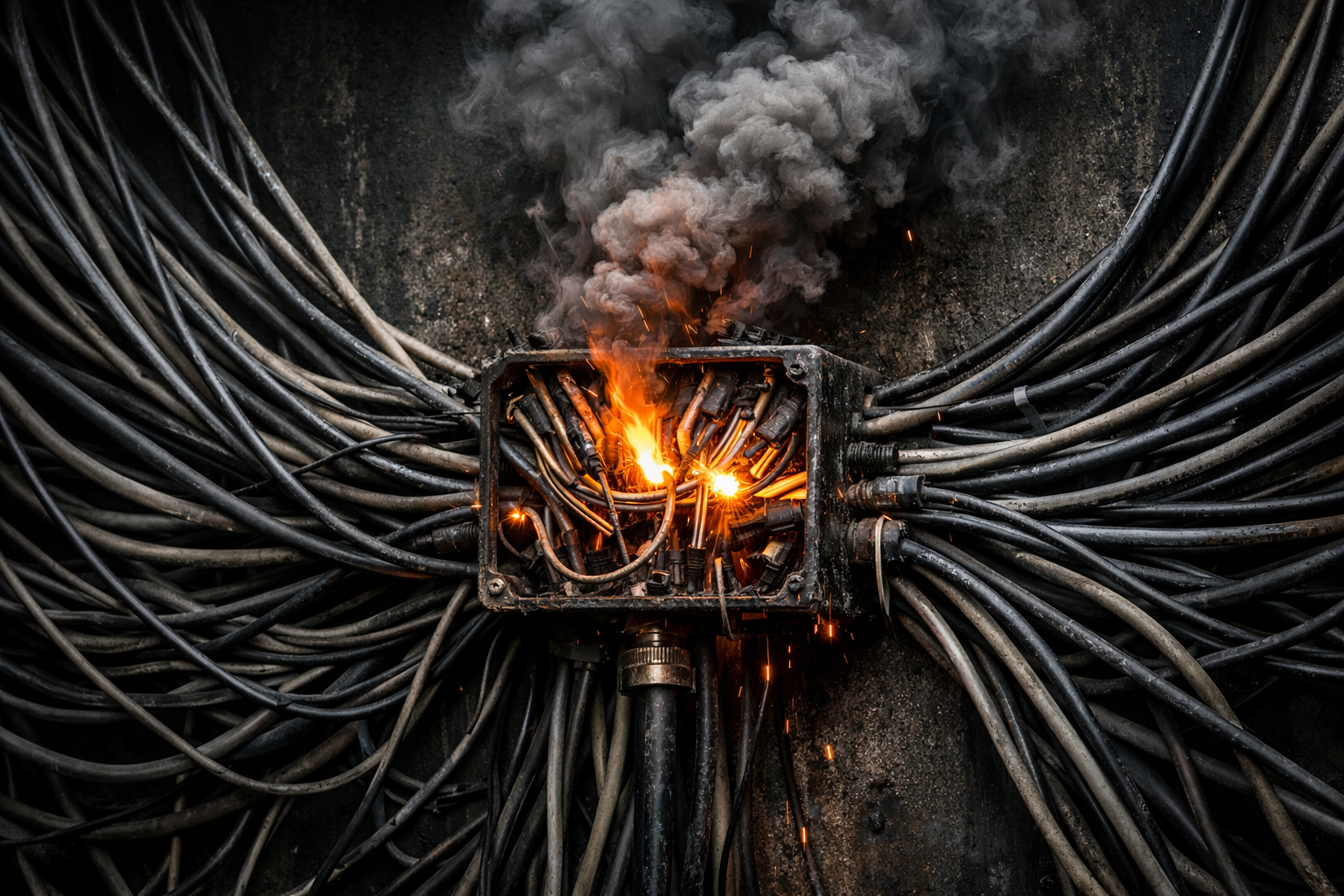

Trust is the unsquared circle at the heart of the agentic AI boom. The more useful an agent becomes, the riskier it gets to give it the access it needs to be useful. The industry is trying to paper over the widening gap with increasingly elaborate technical scaffolding, but the core problem isn't just technical. It's social. It's legal. It's about judgment.

Every time data-sharing fails catastrophically, the institutional reflex is the same: centralise. Build a bigger database. Connect everything. The logic feels irresistible. If the problem is that systems don't talk to each other, surely the answer is to put all the data in one place? But that impulse is killing people.

Gartner predicts that by 2028, a quarter of enterprise breaches will trace back to agentic AI attack vectors. The problem is real and the attention is welcome, but the solutions being proposed should give every enterprise architect pause for thought.